An alert flashes on your screen. Is it real, or just noise? The standard SOC analyst alert investigation workflow is a repeatable process. It’s how you transform raw data from your SIEM and EDR tools into a clear security decision: close, escalate, or respond.

This workflow isn’t about chasing ghosts; it’s about building a defensible process that reduces alert fatigue and catches real threats. We use it every day to turn chaos into controlled action. Keep reading to learn the exact steps that separate a frantic reaction from a professional response.

Critical Steps Every SOC Analyst Should Follow

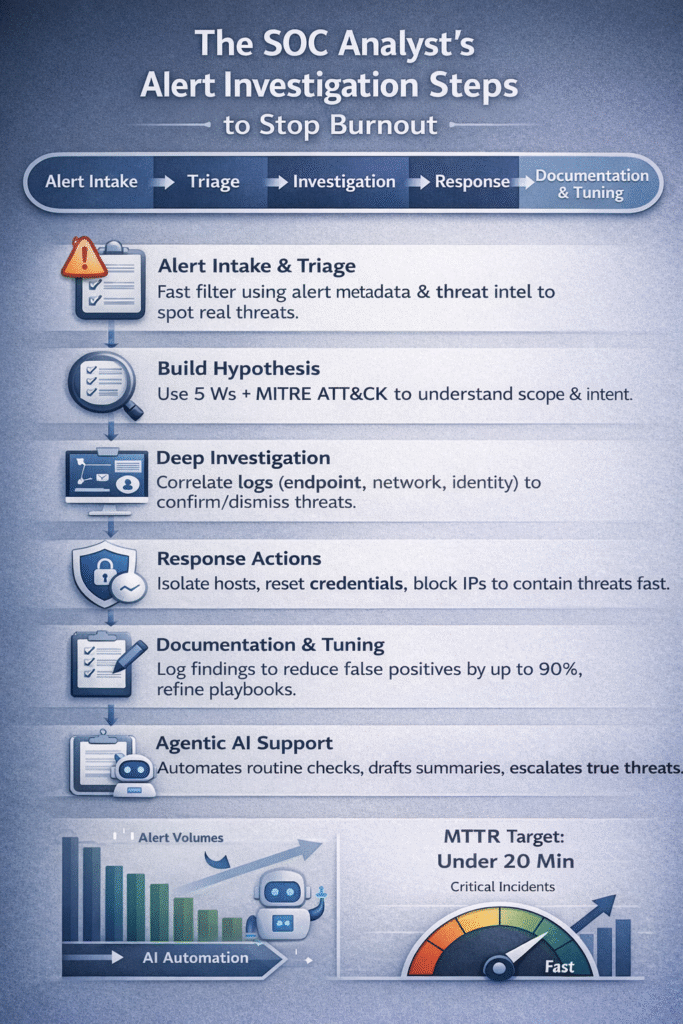

- Triage First, Investigate Second: Quickly filter noise using alert metadata and threat intelligence to preserve focus for real threats.

- Build a Hypothesis: Use a structured framework like the 5 Ws and map evidence to the MITRE ATT&CK framework to understand scope and adversary intent.

- Document for the Future: Every investigation must feed into tuning detection rules and updating playbooks to continuously improve your security posture.

What is the Standard SOC Alert Investigation Workflow?

The process is a cycle. It starts when a monitoring system pings. It ends with a smarter security system. You’re not just closing tickets, you’re building institutional knowledge.

First, an alert is ingested. It comes from a SIEM rule, an EDR detection, or a cloud security finding. It lands in the queue. Next, triage happens. This is the initial sorting. Analysts look at severity, asset value, and known indicators. Many alerts end here, marked as benign.

“Almost everything in a Tier 1 SOC can be summarized like this: Alert → Triage → Investigation → Response → Documentation. […] Investigation is about building a timeline using logs and evidence. The goal is always the same. Confirm or dismiss malicious activity.” – Dylan Heywood

For the rest, the deep dive begins. Logs are correlated across endpoints, network, and identity. A story is pieced together.

Finally, a decision is made. Is it a false positive, suspicious, or a true incident? The outcome dictates the response. The entire loop is documented. This record tunes future alerts. It’s a system that learns from itself.

- Core Stages: Intake, Triage, Validation, Deep Investigation, Classification, Response, Documentation & Tuning.
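To make the cycle concrete, here is a minimal sketch of the stages above as a small state machine. The stage names come from the list; the allowed transitions (for example, a benign alert jumping from triage straight to documentation) are our assumption for illustration, not a standard.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = "intake"
    TRIAGE = "triage"
    VALIDATION = "validation"
    DEEP_INVESTIGATION = "deep_investigation"
    CLASSIFICATION = "classification"
    RESPONSE = "response"
    DOCUMENTATION_TUNING = "documentation_tuning"


# Assumed transitions: alerts judged benign skip ahead to documentation,
# so even closed alerts leave a record that can tune future detections.
NEXT_STAGES = {
    Stage.INTAKE: {Stage.TRIAGE},
    Stage.TRIAGE: {Stage.VALIDATION, Stage.DOCUMENTATION_TUNING},
    Stage.VALIDATION: {Stage.DEEP_INVESTIGATION, Stage.DOCUMENTATION_TUNING},
    Stage.DEEP_INVESTIGATION: {Stage.CLASSIFICATION},
    Stage.CLASSIFICATION: {Stage.RESPONSE, Stage.DOCUMENTATION_TUNING},
    Stage.RESPONSE: {Stage.DOCUMENTATION_TUNING},
    Stage.DOCUMENTATION_TUNING: set(),
}


def advance(current: Stage, target: Stage) -> Stage:
    """Move an alert to the next stage, refusing to skip required steps."""
    if target not in NEXT_STAGES[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    return target
```

Encoding the transitions this way means a ticket cannot reach response without passing through classification, which is the kind of guardrail a defensible process needs.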

How Do Analysts Perform Initial Alert Triage and Validation?

Credits: MyDFIR

This is the gatekeeping stage, where we filter the river of data to find what matters. Almost half of security pros report alert fatigue, and good triage is the primary fix, so refining your incident investigation and analysis steps is how you keep triage working.

We begin with an alert’s metadata: timestamps, IP addresses, user accounts, file hashes. Then we add context. Is this user an admin? Is the IP internal? Was it during a maintenance window? We check threat intelligence feeds or VirusTotal. The core question is: does this activity have a legitimate business reason?

If yes, we close it and note why. That data helps tune the system. If no, or unclear, we escalate. We assign a severity and own the ticket. This process must be fast and repeatable: it’s a checklist, not a hunch. The triage playbooks we build for MSSPs come from thousands of these decisions, cutting through the noise.
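The checklist described here can be sketched in a few lines. This is a simplified illustration, not a production playbook: the `Alert` and `Decision` types, the field names, and the severity rule are all hypothetical, and the threat intel lookup is reduced to a local set of known-bad hashes.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class Alert:
    user: str
    src_ip: str
    file_hash: str
    user_is_admin: bool
    in_maintenance_window: bool


@dataclass
class Decision:
    action: str    # "close" or "escalate"
    severity: str  # "none", "medium", or "high"
    reason: str    # recorded so the note can feed rule tuning later


def triage(alert: Alert, known_bad_hashes: set[str]) -> Decision:
    # 1. Threat intel check: a known-bad hash always escalates.
    if alert.file_hash in known_bad_hashes:
        return Decision("escalate", "high", "file hash matches threat intel")

    # 2. Context check: is there a plausible legitimate business reason?
    #    is_private covers RFC 1918 space plus some other reserved ranges.
    internal = ipaddress.ip_address(alert.src_ip).is_private
    if internal and alert.in_maintenance_window:
        return Decision("close", "none", "internal source during maintenance window")

    # 3. No or unclear: escalate. Admin accounts get a higher severity.
    severity = "high" if alert.user_is_admin else "medium"
    return Decision("escalate", severity, "no legitimate business reason found")
```

Every branch returns a reason string, so a closed alert still carries the note that later helps tune the rule that fired it.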

What Steps Are Involved in Deep Investigation and Evidence Collection?

In our work with MSSPs, a professional security incident investigation process always starts with a working hypothesis. The alert is just the opening clue; the real task is building the full narrative. Experienced analysts don’t guess; they structure the hunt. We typically lean on the 5 Ws to keep investigations grounded and repeatable.

“The triage phase concludes with one of three outcomes: close the alert as benign, escalate directly to incident response, or move to investigation for deeper analysis. […] This stage involves pulling additional data from integrated security tools, correlating events across multiple sources, and conducting deeper analysis of indicators of compromise.” – Expel

- Who: Identify the user, service account, or host involved.

- What: Confirm the exact activity, such as a process launch, registry edit, or data movement.

- When/Where: Place the event in sequence across endpoints, network, and cloud.

- Why: Map behavior to MITRE ATT&CK to judge adversary intent.
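One way to keep the 5 Ws honest is to write the hypothesis down as a record and check which questions still lack evidence. The sketch below is illustrative only: the `Hypothesis` class and its fields are hypothetical, the example host and user are made up, and When and Where are split into separate fields for convenience.

```python
from dataclasses import dataclass, field, fields


@dataclass
class Hypothesis:
    statement: str
    who: str = ""
    what: str = ""
    when: str = ""
    where: str = ""
    why: list[str] = field(default_factory=list)  # MITRE ATT&CK technique IDs

    def open_questions(self) -> list[str]:
        """Return the Ws that still have no evidence behind them."""
        return [f.name for f in fields(self)
                if f.name != "statement" and not getattr(self, f.name)]


h = Hypothesis(
    statement="Phished user ran a malicious PowerShell script",
    who="jdoe on WS-042",
    what="powershell.exe launched with an encoded command",
    why=["T1059.001"],  # Command and Scripting Interpreter: PowerShell
)
print(h.open_questions())  # ['when', 'where']
```

The open questions tell the analyst where to pivot next, in this case toward placing the event in time and across systems.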

From there, our teams pivot hard. We’ve seen many MSSPs miss lateral movement simply because they stopped at the original alert. Strong investigators expand outward, correlating EDR process trees, firewall flows, and authentication logs, until the evidence clearly proves or disproves the hypothesis.
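Expanding outward usually means merging events from several log sources into one timeline around the original alert. Here is a minimal sketch of that pivot, assuming each source has already been normalized into dictionaries with `time` and `host` keys; real SIEM and EDR exports will need their own parsing, and the function name is ours.

```python
from datetime import datetime, timedelta


def build_timeline(
    sources: dict[str, list[dict]],
    pivot_host: str,
    alert_time: datetime,
    window: timedelta,
) -> list[dict]:
    """Merge events for one host, within a time window, across all sources."""
    timeline = []
    for source_name, events in sources.items():
        for event in events:
            same_host = event["host"] == pivot_host
            in_window = abs(event["time"] - alert_time) <= window
            if same_host and in_window:
                # Tag each event with its origin so the story stays traceable.
                timeline.append({**event, "source": source_name})
    return sorted(timeline, key=lambda e: e["time"])
```

Called with EDR, firewall, and authentication events keyed by source name, this returns a single ordered list for the host in question. Repeating the call with each newly discovered host or account is how the investigation widens beyond the first alert.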

How Are Confirmed Threats Contained and Remediated?

A true positive is a call to action. Speed is critical, especially when digital evidence collection and analysis have to run in parallel with containment to limit damage. This is where pre-defined playbooks turn analysis into action.

You don’t debate what to do. You execute. For an endpoint, that often means isolation. Network access is cut to prevent lateral movement. Malicious files are quarantined. For a compromised account, credentials are reset and sessions are revoked. At the network edge, malicious IPs and domains are blocked. This severs command and control channels.

| Response Action | Security Impact |
| --- | --- |
| Host Isolation | Prevents Lateral Movement across the network |
| Credential Reset | Terminates unauthorized access for a compromised identity |
| IP/Domain Blocking | Severs live Command and Control (C2) communication |
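A pre-defined playbook can be as simple as a lookup from incident type to an ordered list of actions. The sketch below only records what would be done; the incident type names and action names are hypothetical labels, and a real implementation would call your EDR, identity provider, and firewall where this one appends to a log.

```python
from datetime import datetime, timezone

# Hypothetical playbooks mirroring the response actions described above.
PLAYBOOKS = {
    "compromised_endpoint": ["isolate_host", "quarantine_files"],
    "compromised_account": ["reset_credentials", "revoke_sessions"],
    "c2_communication": ["block_ip_or_domain"],
}


def run_playbook(incident_type: str, target: str, action_log: list[dict]) -> list[dict]:
    """Execute each step in order and record it for the incident report."""
    steps = PLAYBOOKS.get(incident_type)
    if steps is None:
        raise KeyError(f"no playbook defined for {incident_type!r}")
    for step in steps:
        # Placeholder: a real step would invoke the relevant security tool here.
        action_log.append({
            "action": step,
            "target": target,
            "at": datetime.now(timezone.utc),
        })
    return action_log
```

Because every step lands in `action_log` with a timestamp, the documentation requirement is met as a side effect of responding.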

The metric that matters here is Mean Time to Remediate (MTTR). Top teams aim for under 20 minutes for critical incidents. Coordination is key. You notify the affected system owner and document every action taken. The response must be swift, precise, and documented.
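MTTR is just an average over incident durations, so it is easy to compute from ticket timestamps. This sketch assumes the clock starts at detection and stops at remediation; teams define those endpoints differently, and the `detected` and `remediated` field names are hypothetical.

```python
from datetime import datetime
from statistics import mean


def mttr_minutes(incidents: list[dict]) -> float:
    """Average minutes from detection to remediation across incidents."""
    durations = [
        (i["remediated"] - i["detected"]).total_seconds() / 60
        for i in incidents
    ]
    return mean(durations)


incidents = [
    {"detected": datetime(2024, 1, 1, 9, 0), "remediated": datetime(2024, 1, 1, 9, 10)},
    {"detected": datetime(2024, 1, 1, 14, 0), "remediated": datetime(2024, 1, 1, 14, 20)},
]
print(mttr_minutes(incidents))  # 15.0
```

Tracking this per severity level, rather than as one global number, makes it easier to see whether critical incidents actually meet the target.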

Why Are Documentation and “Lessons Learned” Critical for SOC Maturity?

The case isn’t closed when the threat is contained. It’s closed when you’ve learned from it. Documentation creates a feedback loop that makes your entire SOC smarter.

You write a summary: what happened, what was affected, and the timeline. You detail the root cause. Was it a phishing email, an unpatched vulnerability, a misconfiguration? You list every action taken. This isn’t bureaucracy; it’s your knowledge base. This record is used for compliance, for audits, and most importantly, for tuning.

You take those findings back to your detection engineering team. That noisy rule that gave five false positives last week? You adjust its logic. You might reduce false positives by up to 90% over time.
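Finding the noisy rules is a counting exercise over closed tickets. The following sketch assumes each closed alert records the rule that fired and the final verdict; the field names, the verdict label, and the thresholds are illustrative assumptions to adjust for your own data.

```python
from collections import Counter


def noisy_rules(
    closed_alerts: list[dict],
    min_alerts: int = 5,
    fp_threshold: float = 0.8,
) -> list[tuple[str, float]]:
    """Return (rule, false-positive rate) pairs worth sending to tuning."""
    totals: Counter = Counter()
    false_positives: Counter = Counter()
    for alert in closed_alerts:
        totals[alert["rule"]] += 1
        if alert["verdict"] == "false_positive":
            false_positives[alert["rule"]] += 1

    candidates = []
    for rule, total in totals.items():
        if total < min_alerts:
            continue  # too few alerts to judge the rule fairly
        rate = false_positives[rule] / total
        if rate >= fp_threshold:
            candidates.append((rule, rate))
    # Worst offenders first.
    return sorted(candidates, key=lambda pair: pair[1], reverse=True)
```

The `min_alerts` floor keeps a rule that fired twice from being flagged on thin evidence, which matters when the output drives changes to detection logic.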

You update your threat intelligence feeds with new indicators you found. You refine the playbook for next time. This step transforms a one-time incident into lasting security improvement. It’s how you build a resilient posture.

How Does Agentic AI Transform the Investigation Lifecycle?

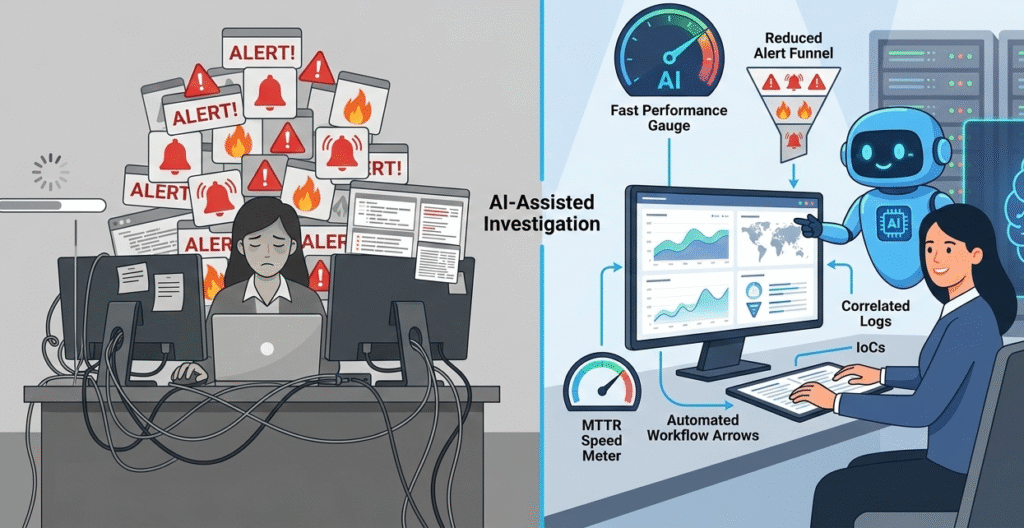

Across the MSSPs we work with, alert volume is already past the breaking point. Seeing 10,000+ alerts a day isn’t unusual, and teams that rely on fully manual triage burn out fast. That’s where agentic AI starts to earn its place.

In mature environments we’ve audited, the AI handles the first pass of investigation. It validates IoCs, correlates baseline telemetry, and produces a short case summary before an analyst ever opens the ticket. Most importantly, it closes the obvious noise and forwards only the alerts that deserve human attention.

- Auto-validates IoCs against threat intel

- Correlates logs across SIEM and EDR

- Drafts investigation summaries for analysts

- Escalates only high-confidence threats
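The routing logic behind that first pass can be illustrated with a deliberately simple, rule-based stand-in. This is not how any particular AI product works: the point scoring, the thresholds, the middle `analyst_queue` route, and the field names are all assumptions chosen to show the shape of the flow.

```python
def first_pass(alert: dict, intel_iocs: set[str], related_events: list[dict]) -> dict:
    """Validate IoCs, weigh correlated events, summarize, and route the alert."""
    # Auto-validate the alert's indicators against known threat intel.
    matched = sorted(set(alert["iocs"]) & intel_iocs)

    # Toy scoring (an assumption): 60 points for any intel match,
    # 10 points per correlated event, capped at 100.
    score = min(100, (60 if matched else 0) + 10 * len(related_events))

    if score >= 70:
        route = "escalate"       # high confidence: send to a human now
    elif score < 20:
        route = "auto_close"     # obvious noise
    else:
        route = "analyst_queue"  # unclear: normal review

    summary = (
        f"Alert {alert['id']}: {len(matched)} IoC match(es), "
        f"{len(related_events)} correlated event(s), score {score}"
    )
    return {"route": route, "score": score, "matched_iocs": matched, "summary": summary}
```

Whatever does the scoring in practice, keeping the summary and matched indicators attached to the decision lets an analyst audit why an alert was closed or escalated.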

We’ve seen this shift cut Mean Time to Conclusion from roughly 40 minutes to under 11. Analysts aren’t pushed out; they’re pulled up the value chain, focusing on complex cases and proactive hunting while automation handles the routine grind.

FAQ

How do SOC analyst alert investigation steps improve alert triage accuracy?

Strong SOC analyst alert investigation steps bring structure to alert triage and reduce guesswork. When SOC teams follow a consistent alert triage workflow, they validate context faster and filter noise earlier.

This improves threat detection quality and helps security teams focus on real risks instead of chasing false positives across busy monitoring systems and security operations queues.

What role does threat intelligence play in SOC analyst alert investigation steps?

Threat intelligence gives SOC analysts critical context during investigations. By checking Indicators of Compromise against threat intelligence feeds and threat intelligence platforms, teams quickly identify known malicious IPs and active campaigns.

This step strengthens alert validation, improves incident response decisions, and helps security systems stay aligned with the evolving threat landscape.

How can Artificial Intelligence support SOC analyst alert investigation steps?

Artificial Intelligence helps automate repetitive work inside modern SOC alert workflows. AI models can assist with alert categorization, automated analysis, and incident summaries before analysts step in.

Used carefully, this reduces alert fatigue, improves response times, and supports security teams without replacing human judgment in high-risk cyber security investigations.

Which security tools matter most for efficient alert investigation workflows?

Effective investigations rely on well-integrated security tools. SIEM platforms, EDR tools, XDR platforms, and network monitoring systems provide the visibility analysts need.

When combined with intrusion detection systems and identity platforms, SOC teams can correlate network traffic, user behavior, and endpoint activity to strengthen threat detection and overall cybersecurity posture.

Building Your Investigative Rhythm

Following the steps gives you a blueprint, but building an investigative rhythm takes practice. It’s the difference between reading a recipe and knowing how to cook. You start by sticking to the process: intake, triage, investigate, respond, document. Over time, it becomes automatic. You begin to see patterns in the noise and connections a checklist misses.

The real goal is to close alerts faster with each incident, make your security smarter, and free your team to focus on complex threats. This workflow is your foundation. Build it, refine it, and let it do the heavy lifting.

Want to make this process bulletproof? Let’s talk about transforming your alert management.

References

- https://medium.com/@dylanh122022/the-tier-1-soc-workflow-explained-alert-to-response-17fc2c6fc73f

- https://expel.com/cyberspeak/what-does-the-soc-alert-lifecycle-look-like/