It’s a strange time in security operations. The machines have gotten smart and fast enough to see patterns humans miss and respond in seconds. An AI agent can now handle in a single day the incident volume a human analyst handles in a year.

But here’s the rub: most of those powerful agents are stuck in pilot programs, gathering dust. The gap between capability and real-world use isn’t about technology anymore. It’s about trust, control, and a framework to manage these new digital colleagues.

If you’re running a SOC, the next leap isn’t buying a new platform. It’s building the governance to safely use it. Keep reading to understand the data shaping this shift and what you must do next.

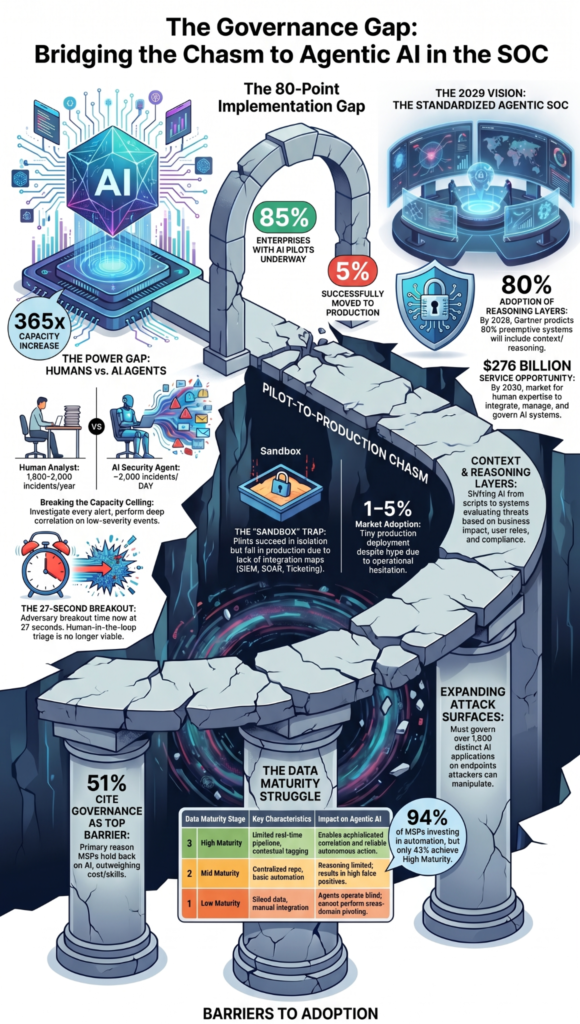

Key Statistics on Agentic AI & SOC Automation

The numbers tell a clear story of explosive potential hindered by human-scale problems. Adoption is low, threats are moving faster, and the market is poised for massive growth, all while a critical governance gap remains wide open.

- 2,000/day (AI) vs. 1,800-2,000/year (human) – An AI security agent has a raw processing capacity roughly 365 times that of a single human analyst. This defines the new scale of operations.

- 1-5% adoption – Despite the hype, actual production deployment of AI agents across the target SOC market is minuscule. The technology is proven, but implementation is nascent.

- 85% piloting, 5% production – A staggering 80-point gap exists between experimentation and live use. Enterprises are testing, but overwhelmingly failing to operationalize.

- 27 seconds (fastest) / 29 minutes (average) – Adversaries are moving dramatically faster. The fastest recorded breakout now takes under half a minute, and the average is down to 29 minutes, compressing the entire response timeline.

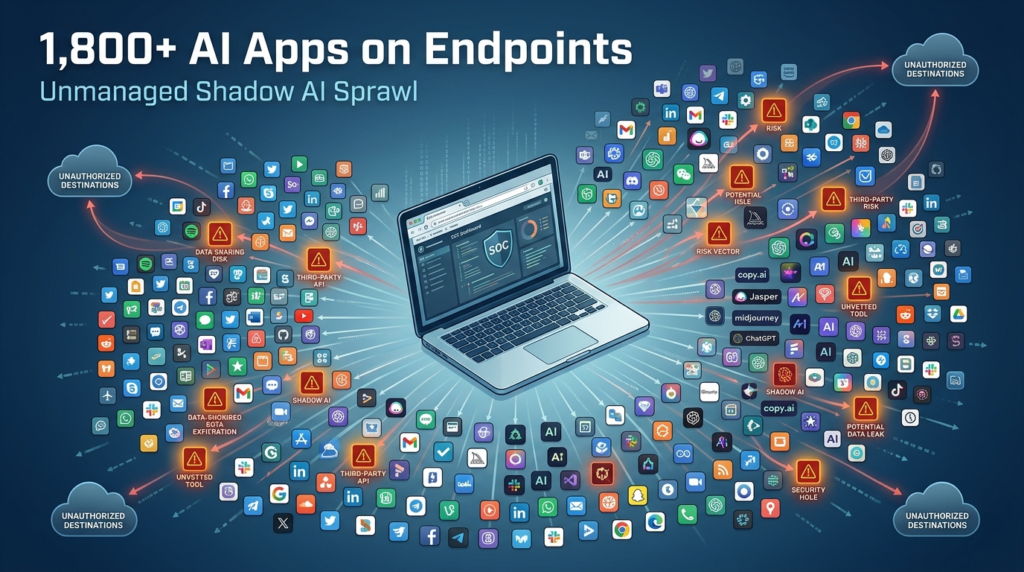

- 1,800+ AI apps – The enterprise environment itself is becoming “agentic.” CrowdStrike observes a proliferation of AI applications on endpoints, creating a new, complex attack surface.

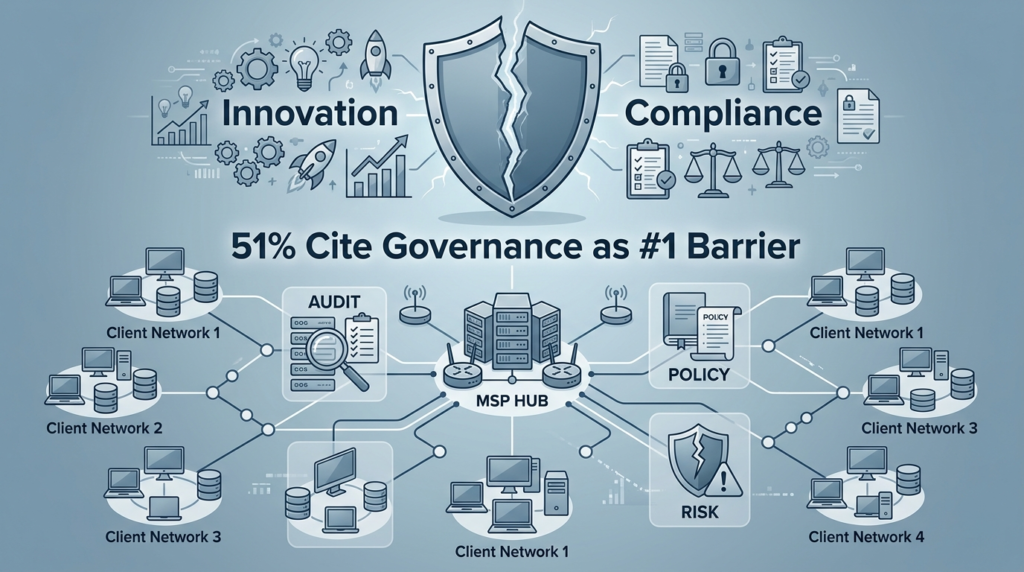

- 51% – For Managed Service Providers, governance and compliance concerns are the single largest barrier to adopting AI, outweighing cost, skills, or data issues.

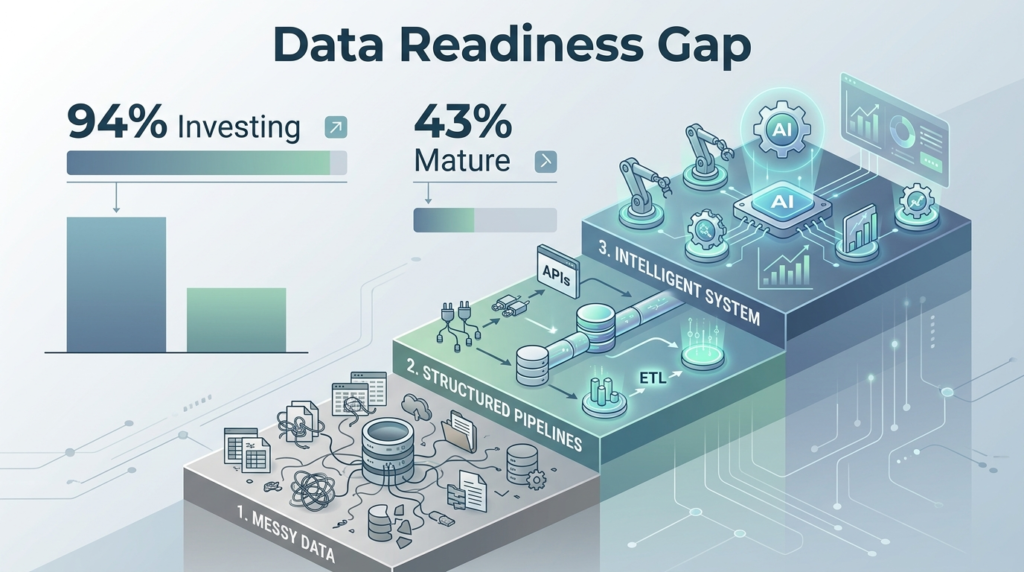

- 94% investing, 43% high maturity – Most MSPs are spending on automation to prepare for AI, but fewer than half have achieved a sophisticated, mature data environment to support it.

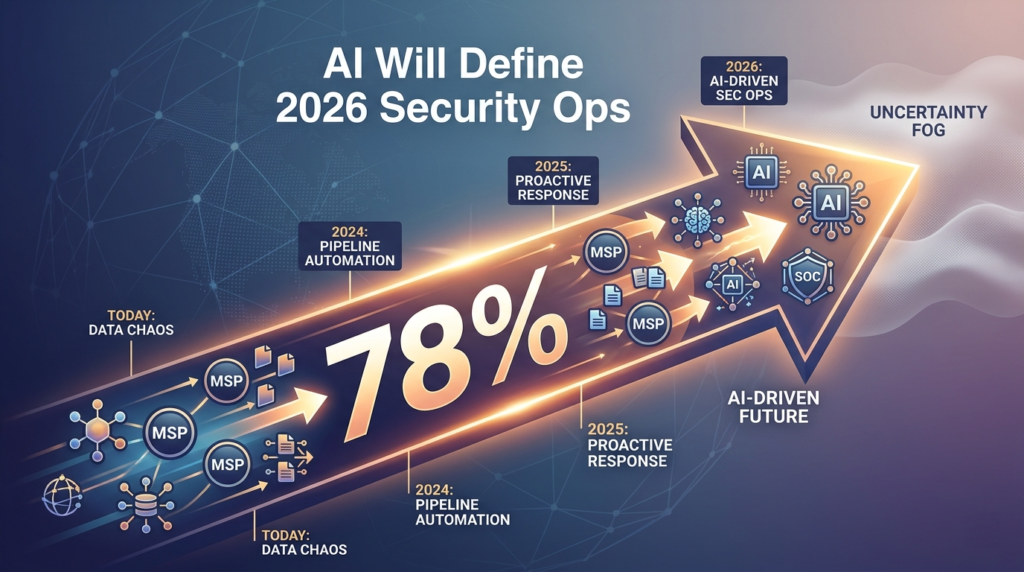

- 78% – A strong majority of service providers believe AI-driven operations will fundamentally reshape the security industry within the current year.

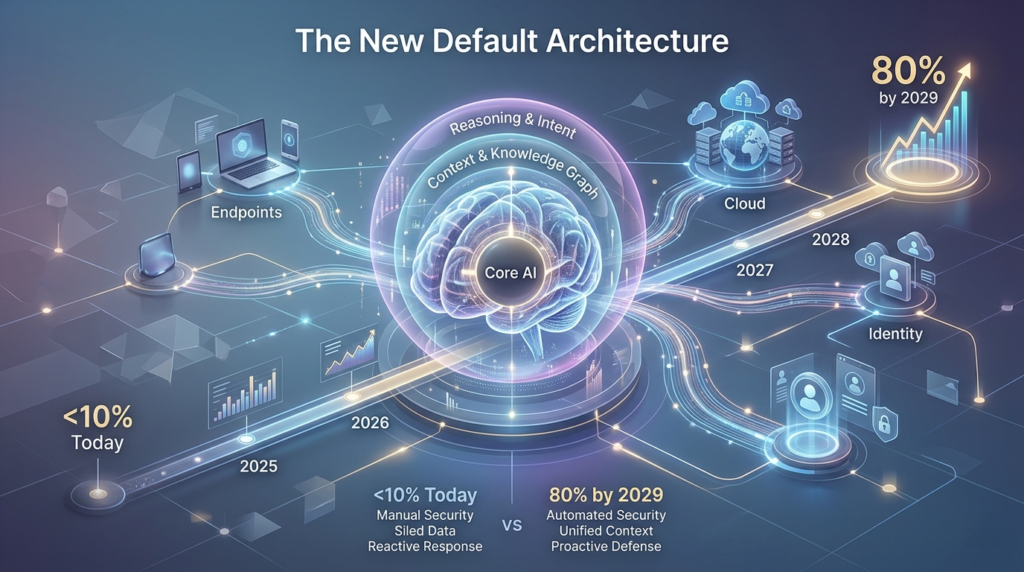

- 80% by 2029 vs. <10% today – Gartner predicts that context and reasoning layers, the core of agentic AI, will go from under 10% of preemptive cybersecurity systems today to 80% by 2029, making them standard architecture.

- 22.8% CAGR – The financial market for AI-enabled cybersecurity is projected to grow at a compound annual rate of over 22%, expanding from $38 billion to nearly $200 billion in eight years.

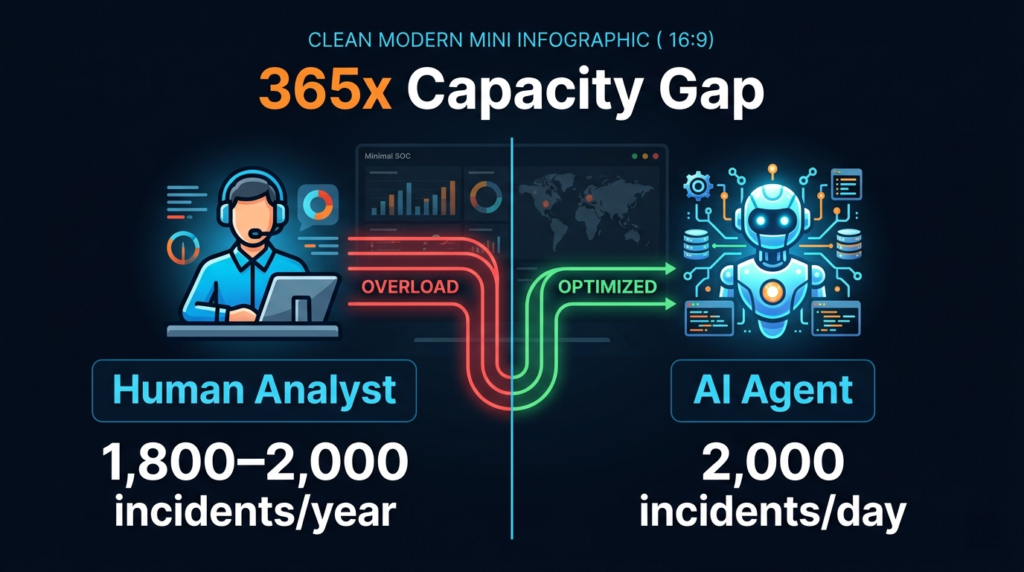
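As a sanity check on the CAGR statistic, compounding $38 billion at 22.8% per year for eight years should land just under $200 billion. The Python sketch below assumes simple annual compounding from the stated base. The function names are illustrative and are not taken from any cited report.

```python
def project(start: float, cagr: float, years: int) -> float:
    """Compound a starting value at a constant annual growth rate."""
    return start * (1 + cagr) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Invert the compounding formula to recover the annual growth rate."""
    return (end / start) ** (1 / years) - 1


# Figures from the statistic above: $38B base, 22.8% CAGR, eight years.
market_end = project(38.0, 0.228, 8)
print(f"Projected market: ${market_end:.1f}B")  # just under $200B
```

Running the projection backward through `implied_cagr` recovers the 22.8% rate, which confirms that the two ends of the range are consistent with each other.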

The 365x Capacity Gap: 2,000 Incidents Per Day vs. Per Year

That number, 2,000, hangs in the air. It isn’t just bigger; it belongs to a different scale entirely. According to data cited in the Gartner Emerging Tech: AI Vendor Race report (April 2026), a human analyst might process between 1,800 and 2,000 serious incidents in a year, whereas an AI agent can handle the same volume in a single day.
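The 365x figure comes from straightforward arithmetic. This is a minimal sketch that assumes two things: the agent sustains 2,000 incidents per day on all 365 days, and those incidents are comparable in complexity to an analyst's serious incidents. The helper name is hypothetical.

```python
DAYS_PER_YEAR = 365


def capacity_ratio(agent_per_day: int, human_per_year: int) -> float:
    """Ratio of one agent's annual incident throughput to one analyst's."""
    return agent_per_day * DAYS_PER_YEAR / human_per_year


# Agent: 2,000 incidents/day. Human: 1,800-2,000 serious incidents/year.
for human_rate in (2_000, 1_800):
    print(f"Human at {human_rate:,}/year: agent is ~{capacity_ratio(2_000, human_rate):.0f}x")
```

At the low end of the human range (1,800 per year), the ratio rises to about 406x. That makes 365x the conservative reading of the data.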

This isn’t about working harder; it’s a fundamental shift in capacity.

The implication is brutal for traditional SOCs. Alert fatigue wasn’t just an annoyance; it was a capacity ceiling. Humans have a biological limit for focused analysis. Machines don’t. That difference makes an entirely different strategy possible.

Instead of merely reacting to the highest-priority alerts, a SOC with agentic AI can afford to investigate everything. It can perform deep correlation on lower-severity events that would previously have been ignored, potentially uncovering stealthy campaigns. The volume of data becomes an asset, not a burden.

But this power requires a new kind of oversight. You wouldn’t give a human analyst carte blanche to execute any action on the network. The same logic applies, magnified, to an agent with 365 times the capacity. The governance framework needs to define its authority, its scope of action, and its decision-making logic. Without that, its power is just a risk.
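One way to make "authority, scope of action, and decision-making logic" concrete is a default-deny policy object that the agent must consult before acting. The sketch below is hypothetical: the action names, the asset groups, and the `AgentPolicy` class are illustrative assumptions, not any vendor's API.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"               # agent may act autonomously
    REQUIRE_APPROVAL = "approve"  # a human must sign off first
    DENY = "deny"                 # outside the agent's authority


@dataclass(frozen=True)
class AgentPolicy:
    autonomous_actions: frozenset[str]  # actions the agent may take on its own
    approval_actions: frozenset[str]    # actions it may propose for human approval
    scope: frozenset[str]               # asset groups it may touch at all

    def evaluate(self, action: str, asset_group: str) -> Decision:
        if asset_group not in self.scope:
            return Decision.DENY
        if action in self.autonomous_actions:
            return Decision.ALLOW
        if action in self.approval_actions:
            return Decision.REQUIRE_APPROVAL
        return Decision.DENY  # default-deny anything not explicitly granted


policy = AgentPolicy(
    autonomous_actions=frozenset({"enrich_alert", "open_ticket"}),
    approval_actions=frozenset({"isolate_host", "disable_account"}),
    scope=frozenset({"workstations", "dev_servers"}),
)

print(policy.evaluate("open_ticket", "workstations"))  # Decision.ALLOW
print(policy.evaluate("isolate_host", "dev_servers"))  # Decision.REQUIRE_APPROVAL
print(policy.evaluate("isolate_host", "payment_db"))   # Decision.DENY
```

The key design choice here is default-deny. Anything not explicitly granted falls back to `DENY`, so expanding the agent's authority has to be a deliberate, reviewable change rather than an accident.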

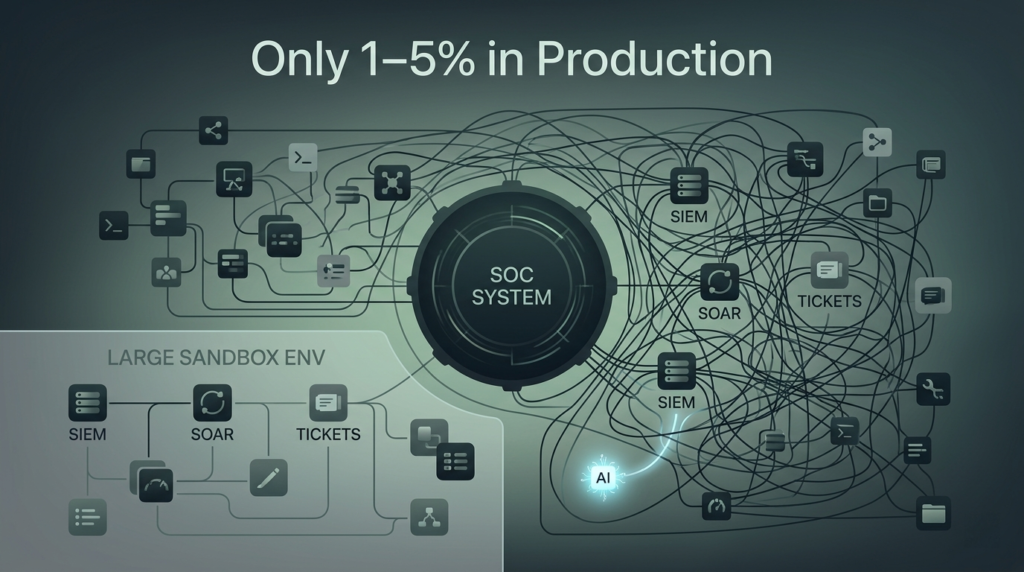

Only 1–5% of the Target SOC Market Has Deployed AI Agents

The chatter at every conference is about AI, yet the reality is stark. Industry data and analysis from Arctic Wolf (April 2026) reveal that the adoption rate of AI agents in production environments sits between a mere one and five percent.

This is not a failure of technology but a failure of operational translation. Organizations hesitate to move an autonomous agent into production without a clear map of how it will integrate with everything already there.

Think of it like introducing a new, incredibly powerful animal into a delicate ecosystem. The SOC is that ecosystem. It has processes, people, tools, and a fragile balance. Throwing in a super-intelligent, autonomous agent without understanding how it will interact with every other component is a recipe for chaos.

Most organizations look at that complexity and pause. They run a pilot in a safe, isolated sandbox. They see it work. Then they stare at the tangled web of their production SIEM, SOAR, ticketing system, and compliance rules, and they stall.

The pattern is consistent across most enterprises:

- Pilot success doesn’t equal production readiness — controlled environments remove risk, but also remove reality

- Integration complexity slows momentum — stitching AI into SIEM, SOAR, and ticketing systems exposes hidden dependencies

- Governance uncertainty creates hesitation — unclear accountability, auditability, and compliance boundaries block deployment

- Risk perception outweighs capability — the downside of a wrong autonomous action feels greater than the upside of speed

- Operational ownership is undefined — no clear team “owns” the agent once it moves beyond experimentation

The gap between the sandbox and the live network is where the real work lies. It’s not a technical gap; it’s an operational and governance gap. This low adoption number is the clearest market signal that the problem has shifted.

The question is no longer “Can the AI do the job?” It’s “Can we manage the AI doing the job?”

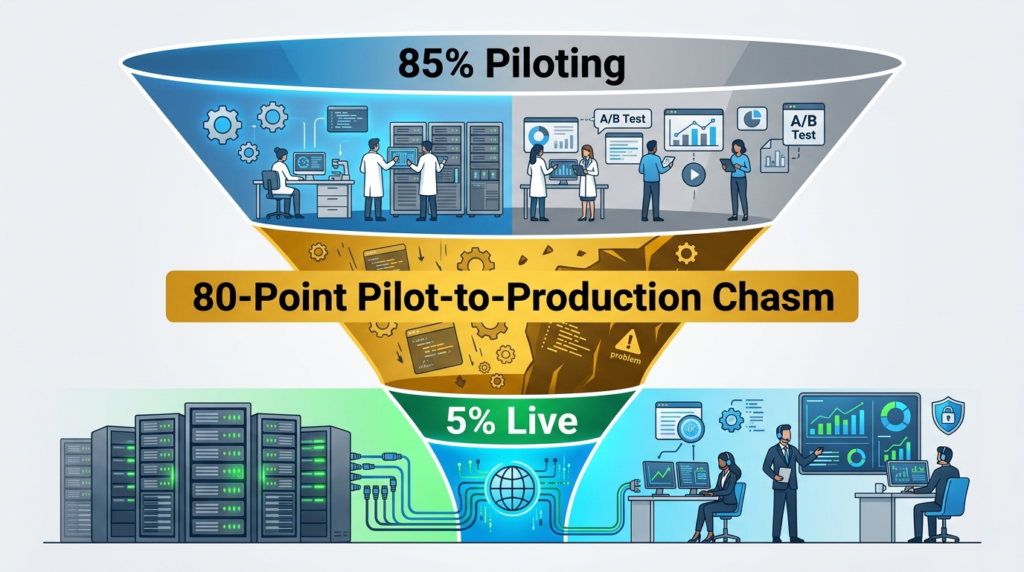

The 80-Point Pilot-to-Production Chasm: 85% Piloting, 5% Live

There is a massive disconnect in how companies approach AI implementation. As highlighted by Cisco’s Jeetu Patel at RSAC 2026 (March 2026), while 85% of enterprise customers have AI agent pilots underway, only 5% have successfully moved them into production. That’s an 80-percentage-point chasm. What’s happening in the vast space between trying and doing?

Pilots are safe. They’re often run on historical data, or in a mirrored environment with no real consequences. They prove the AI can find threats. Moving to production means letting the AI act on real data, affecting real systems, and potentially making real mistakes that have real costs. The jump requires a level of confidence that most organizations haven’t built.

That confidence comes from answers to questions pilots don’t address: What is the agent’s “normal” behavior? How do we audit its decisions? Who is accountable if its autonomous response causes a business interruption? How do we ensure its actions remain compliant with industry regulations? The pilot shows capability. Production requires control. Until organizations solve for control, the agents remain in their test cages, no matter how powerful they are.
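Auditability is one of the easier questions to make concrete. A common pattern is an append-only decision log in which each entry carries a hash of the previous entry, so any later edit or reordering becomes detectable. This minimal sketch uses only the Python standard library. The `DecisionLog` class and its fields are hypothetical.

```python
import hashlib
import json
import time

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Hash a canonical JSON encoding so verification is deterministic.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class DecisionLog:
    """Append-only log; each entry stores the hash of the entry before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, agent: str, action: str, target: str, rationale: str) -> dict:
        body = {
            "ts": time.time(),
            "agent": agent,
            "action": action,
            "target": target,
            "rationale": rationale,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = DecisionLog()
log.record("triage-agent", "isolate_host", "ws-042", "beaconing to known C2 domain")
log.record("triage-agent", "open_ticket", "ws-042", "containment follow-up")
print(log.verify())                  # True
log.entries[0]["target"] = "ws-999"  # simulate after-the-fact tampering
print(log.verify())                  # False
```

Hash chaining makes tampering evident, not impossible. Anyone able to rewrite the entire log could recompute every hash, so a production design would also need to store entries somewhere the agent itself cannot modify.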

The 27-Second Window: Adversary Breakout Time Compression

CrowdStrike’s keynote delivered a chilling metric. The fastest recorded adversary breakout time, meaning the interval from initial compromise to lateral movement within a network, is now 27 seconds. The average has dropped to 29 minutes. This isn’t just a trend; it’s a phase change. The threat environment has become a hyper-speed game.

Human response cannot operate on a 27-second clock. Even a 29-minute average is untenable for a SOC relying on manual triage and escalation. This compression is the single strongest argument for autonomous threat detection and response. The machine doesn’t need to wake up, get coffee, and open a ticket. It’s already watching, and it can act within the same timeframe the attacker is using.

What this compression changes inside the SOC is immediate and structural:

- Response time becomes the primary control plane — speed is no longer an advantage, it’s the baseline

- Manual triage collapses under pressure — human-in-the-loop models cannot keep pace with sub-minute threats

- Automation shifts from support to necessity — autonomous detection and response become foundational, not optional

- False positives carry higher consequences — mistakes executed at machine speed scale impact instantly

- Decision quality must match decision speed — fast actions without context-aware reasoning create new risks

This puts immense pressure on the design of AI triage workflows and autonomous response orchestration. The agent’s logic must be impeccable. A false positive that triggers an automated containment action at 27-second speed could be as disruptive as an actual breach.

The need for speed forces a parallel need for precision and explainable AI security. You have to know, and trust, why it acted so fast.
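Precision and explainability can be designed into the response logic itself. The sketch below auto-contains a host only when the detection is confident and the host is not business-critical. It returns its reasons alongside the action, so every fast decision leaves a human-readable explanation. The 0.9 confidence threshold and the criticality tiers are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    host: str
    confidence: float      # model score in [0, 1]
    host_criticality: str  # "low", "medium", or "high" (hypothetical tiers)


def containment_decision(d: Detection, threshold: float = 0.9) -> tuple[str, list[str]]:
    """Return an action plus the reasons behind it, so each decision is explainable."""
    reasons: list[str] = []
    if d.confidence < threshold:
        reasons.append(f"confidence {d.confidence:.2f} below threshold {threshold:.2f}")
        return "escalate_to_analyst", reasons
    reasons.append(f"confidence {d.confidence:.2f} meets threshold {threshold:.2f}")
    if d.host_criticality == "high":
        # Wrongly isolating a critical host could be as disruptive as a breach.
        reasons.append("host is business-critical; human approval required")
        return "request_approval", reasons
    reasons.append(f"host criticality '{d.host_criticality}' allows auto-containment")
    return "isolate_host", reasons


action, why = containment_decision(Detection("ws-042", 0.97, "low"))
print(action)  # isolate_host
for reason in why:
    print(" -", reason)
```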

The New Attack Surface: 1,800+ AI Apps on Endpoints

There’s a second, quieter revolution happening inside the enterprise itself. CrowdStrike reported detecting over 1,800 distinct AI applications running on typical enterprise endpoints. Every department is adopting some AI tool for productivity, analysis, or creativity. This creates a sprawling, new attack surface.

These applications are often built on large language models, have access to corporate data, and communicate via APIs. They are, in a basic sense, agents themselves. An attacker doesn’t need to target the traditional security agent; they can target these productivity agents instead, for example by manipulating a marketing AI to exfiltrate data or by corrupting a financial analysis model.

This means security’s scope has expanded. Agentic cybersecurity isn’t just about defending with AI agents; it’s also about securing the other AI agents already living in your environment. Your governance framework must encompass these tools, their data access, and their network permissions. The boundary of what you protect has grown more complex.
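Bringing these tools under governance starts with an inventory that records each AI app's approval status, data access, and network reach. This is a minimal sketch that assumes such an inventory already exists. The `AIApp` fields, the scope labels, and the list of sensitive scopes are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AIApp:
    name: str
    sanctioned: bool       # approved through the governance process
    data_scopes: set[str]  # data domains the app can read (hypothetical labels)
    outbound_apis: int     # distinct external API destinations observed


# Assumption: the organization defines which data domains count as sensitive.
SENSITIVE_SCOPES = {"finance", "hr", "customer_pii"}


def review(apps: list[AIApp]) -> list[str]:
    """Flag AI apps whose approval status, data access, or network reach needs review."""
    issues = []
    for app in apps:
        sensitive = sorted(app.data_scopes & SENSITIVE_SCOPES)
        if not app.sanctioned:
            issues.append(f"{app.name}: unsanctioned (sensitive scopes: {sensitive or 'none'})")
        if sensitive and app.outbound_apis > 0:
            issues.append(
                f"{app.name}: sensitive data {sensitive} with "
                f"{app.outbound_apis} outbound API destination(s)"
            )
    return issues


inventory = [
    AIApp("notes-summarizer", True, {"wiki"}, 1),
    AIApp("marketing-copilot", False, {"crm", "customer_pii"}, 3),
]
findings = review(inventory)
for finding in findings:
    print(finding)
```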

The Primary MSP Barrier: 51% Cite Governance & Compliance

For Managed Service Providers, the path to AI is blocked by a specific concern. According to a survey of 333 MSPs globally, 51% point to governance and compliance as their main barrier. This is higher than data security, value realization, or skills gaps. It makes sense. An MSP’s business is trust. They operate under stringent contracts and regulations.

Deploying an autonomous AI agent for a client adds a layer of liability and uncertainty. How do you report on its actions to the client? How do you guarantee its actions comply with the client’s industry regulations (HIPAA, GDPR, etc.)? How do you provide transparency? Without clear answers, an MSP risks its reputation and its contracts. They can’t move forward.

This barrier represents the single biggest consulting opportunity in the space. MSPs don’t need another vendor platform demo. They need a vendor-agnostic audit framework, a set of compliance controls for autonomous systems, and a clear model for agent accountability. Solving governance unlocks the market for them.

The Data Readiness Struggle: 94% Investing, 43% Mature

Data readiness remains a significant hurdle. AvePoint and Omdia’s research (April 2026) shows that while 94% of MSPs are investing in automation to prepare their data for AI, only 43% have reached high maturity. The gap reflects how much foundational work is still required: AI agents, especially those doing contextual threat analysis, need clean, structured, and accessible data. They can’t reason with chaos.

Many organizations find their data is siloed, inconsistently formatted, or laden with legacy noise. The investment is going into data lakes, normalization projects, and API-driven automation to stitch systems together. This is the unglamorous, critical plumbing work. Without it, the AI agent is like a brilliant analyst locked in a library where every book is written in a random language and thrown on the floor. Its potential goes to waste.

| Data Readiness Stage | Key Characteristics | Impact on Agentic AI |
|---|---|---|
| Low Maturity | Siloed data, manual integration, inconsistent formatting. | Agents operate blind in isolated domains; cannot perform cross-domain pivoting. |
| Mid Maturity | Centralized data repository, basic automation, some normalization. | Agents can access data but reasoning is limited by quality issues; high false positives. |
| High Maturity | Unified, real-time data pipeline, automated enrichment, contextual tagging. | Agents can perform sophisticated correlation, predictive modeling, and reliable autonomous action. |
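Moving from low to mid maturity is largely a normalization problem. When three tools describe the same host three different ways, their alerts cannot be correlated. The sketch below maps two hypothetical alert formats, one from an EDR tool and one from a firewall, onto a single shared schema. The field names are invented for illustration; real products use their own.

```python
from datetime import datetime, timezone

# Hypothetical vendor severity codes mapped to a shared scale.
EDR_SEVERITY = {1: "low", 2: "medium", 3: "high"}


def normalize(raw: dict, source: str) -> dict:
    """Map a vendor-specific alert onto one common schema (field names are illustrative)."""
    if source == "edr":
        return {
            "timestamp": datetime.fromtimestamp(raw["epoch"], tz=timezone.utc),
            "host": raw["hostname"].lower(),
            "severity": EDR_SEVERITY[raw["sev"]],
            "source": source,
        }
    if source == "firewall":
        return {
            "timestamp": datetime.fromisoformat(raw["time"]),
            "host": raw["src_host"].lower(),
            "severity": raw["level"].lower(),
            "source": source,
        }
    raise ValueError(f"no mapping defined for source {source!r}")


alerts = [
    normalize({"epoch": 1_700_000_000, "hostname": "WS-042", "sev": 3}, "edr"),
    normalize({"time": "2023-11-14T22:13:20+00:00", "src_host": "ws-042", "level": "HIGH"}, "firewall"),
]
# Both alerts now share host, severity, and timestamp, so they can be correlated.
print(alerts[0]["timestamp"] == alerts[1]["timestamp"])
```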

78% of MSPs Believe AI Will Shape 2026 Security Operations

The service provider community is fully committed to the AI transition. As reported in Seceon’s Global MSP & MSSP Security Operations Outlook (March 2026), 78% of MSPs believe that AI-driven security operations will be the defining force shaping their industry within this year. This isn’t a distant future prediction. It’s an immediate expectation.

This belief drives their investments and their anxieties. They are scrambling to understand how to offer AI-powered services, how to price them, and how to differentiate from competitors also jumping on the trend. The “how” remains murky, but the “why” is clear: the clients will demand it, the threats require it, and the efficiency gains promise a better business model. The pressure is on to move from belief to implementation.

The 2029 Forecast: 80% of Systems Will Have Context & Reasoning Layers

Gartner makes a strategic prediction that feels almost inevitable. By 2029, they say, 80% of preemptive cybersecurity systems will include context and reasoning layers. Today, that figure is below 10%. This is the architectural future.

A context and reasoning layer is what separates a simple automated script from an agentic AI. It evaluates a threat alert against business context (what system is affected, what data is on it, what the user’s role is, and what compliance requirements apply) and then reasons about the appropriate response. It moves from “what” to “why” and then to “how.”

What this layer actually introduces into security operations is a shift in capability:

- Context-aware decision making — alerts are interpreted within business impact, not just technical severity

- Adaptive response logic — actions change based on environment, user role, and data sensitivity

- Cross-domain correlation — signals from endpoints, cloud, identity, and network are evaluated together

- Explainability and traceability — every decision can be audited, justified, and improved over time

- Policy-driven autonomy — responses align with governance frameworks, not just detection rules
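Very roughly, a context layer can be approximated as a function that rescales technical severity by business context. The weights, tiers, and role names below are hypothetical placeholders. A real reasoning layer would draw them from the asset inventory, data classification, and identity systems rather than from hard-coded tables.

```python
# Hypothetical weights; a real deployment would derive these from its own
# asset inventory, data classification, and identity systems.
ASSET_CRITICALITY = {"low": 1, "medium": 2, "high": 3}
DATA_SENSITIVITY = {"public": 0, "internal": 1, "regulated": 3}
PRIVILEGED_ROLES = {"domain_admin", "finance_approver"}


def business_priority(technical_severity: int, asset: str, data: str, user_role: str) -> int:
    """Scale a technical severity score (1-5) by asset, data, and identity context."""
    multiplier = ASSET_CRITICALITY[asset] + DATA_SENSITIVITY[data]
    if user_role in PRIVILEGED_ROLES:
        multiplier += 2
    return technical_severity * multiplier


# Same technical severity, very different business impact.
print(business_priority(3, "low", "public", "intern"))            # 3
print(business_priority(3, "high", "regulated", "domain_admin"))  # 24
```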

This shift means buying a security tool in three years will inherently mean buying an AI agent. The market will standardize this. The distinction between “tool” and “agent” will quietly disappear as reasoning becomes a default layer, not a premium feature.

The real question for organizations isn’t whether this transition will happen. It’s whether they will build the governance and operational maturity to manage these systems before they become ubiquitous, or be forced to adapt under pressure.

The $276 Billion Partner Services Opportunity by 2030

Omdia forecasts the financial scale of this shift. The partner services opportunity around AI, including consulting, integration, management, and governance, will reach $276 billion by 2030. This isn’t the market for the AI software itself. This is the market for the human expertise required to make it work.

This number validates that the true value lies not in the agent, but in the ecosystem that supports it. MSSPs, integrators, and consultants who can bridge the pilot-to-production chasm, who can build the behavioral baselines vendors don’t supply, and who can create the compliant, auditable frameworks will capture this enormous wave. The technology creates the possibility, but the service unlocks the value.

FAQ

What is agentic cybersecurity in modern SOC environments?

Agentic cybersecurity refers to systems in which SOC AI agents independently handle tasks such as autonomous threat detection and real-time incident response. These systems rely on machine learning models, contextual threat analysis, and self-learning security agents that improve over time. The goal is to scale SOC operations with less manual intervention while maintaining accuracy, adaptability, and strong security operations automation as the threat landscape evolves.

How do SOC AI agents reduce alert fatigue effectively?

SOC AI agents reduce alert fatigue by enriching alerts automatically, correlating related events, and using LLM-powered triage to prioritize meaningful threats. Through automated rule tuning and feedback loops, they filter out noise and highlight critical risks. This lets the SOC process high alert volumes while freeing human analysts to focus on complex decisions instead of repetitive triage.
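Of these techniques, event correlation is the simplest to illustrate. The sketch below groups alerts on the same host into a single incident when each arrives within five minutes of the previous one. The alert fields and the 300-second window are assumptions made for illustration.

```python
from collections import defaultdict


def correlate(alerts: list[dict], window_s: int = 300) -> list[list[dict]]:
    """Group same-host alerts arriving within `window_s` seconds of the previous one."""
    by_host: dict[str, list[dict]] = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a["ts"]):
        by_host[alert["host"]].append(alert)

    incidents = []
    for host_alerts in by_host.values():
        current = [host_alerts[0]]
        for alert in host_alerts[1:]:
            if alert["ts"] - current[-1]["ts"] <= window_s:
                current.append(alert)  # close in time: same incident
            else:
                incidents.append(current)  # gap too large: start a new incident
                current = [alert]
        incidents.append(current)
    return incidents


alerts = [
    {"host": "ws-042", "ts": 0, "rule": "suspicious_powershell"},
    {"host": "ws-042", "ts": 60, "rule": "new_scheduled_task"},
    {"host": "ws-042", "ts": 120, "rule": "outbound_beacon"},
    {"host": "db-01", "ts": 90, "rule": "failed_logins"},
    {"host": "ws-042", "ts": 4000, "rule": "usb_mount"},
]
incidents = correlate(alerts)
print(f"{len(alerts)} alerts -> {len(incidents)} incidents")  # 5 alerts -> 3 incidents
```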

How does autonomous threat detection actually work?

Autonomous threat detection uses behavioral anomaly detection, predictive threat intelligence, and statistical threat modeling to identify unusual patterns in continuously analyzed data streams. Combined with threat prediction models and unified threat intelligence, these capabilities allow self-learning security agents to adapt in real time, improving detection accuracy and enabling faster, proactive responses to emerging risks without constant manual input.
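At its simplest, behavioral anomaly detection compares new activity against a learned baseline. This sketch applies a z-score test to a hypothetical two-week baseline of one user's daily outbound data volume. Production systems use far richer models, but they share the same principle of flagging deviations from "normal."

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], observed: float, z_threshold: float = 3.0) -> bool:
    """Flag `observed` if it lies more than `z_threshold` standard deviations from the baseline mean."""
    mu, sigma = mean(history), stdev(history)
    if sigma == 0:
        return observed != mu  # flat baseline: any change is a deviation
    return abs(observed - mu) / sigma > z_threshold


# Hypothetical baseline: a user's daily outbound megabytes over two weeks.
baseline = [120, 135, 110, 128, 140, 115, 122, 130, 118, 125, 137, 121, 129, 133]
print(is_anomalous(baseline, 131))   # False: within the normal range
print(is_anomalous(baseline, 2400))  # True: possible exfiltration
```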

What role does AI play in incident response automation?

AI enables real-time incident response through autonomous response orchestration and API-driven automation. When integrated with SIEM and SOAR platforms, these systems can act immediately on detected threats. Policy enforcement agents and automated endpoint detection help contain risks quickly, while cross-domain pivoting extends coverage across systems, improving both the speed and the consistency of incident handling.

How can organizations prepare for a hybrid human-AI SOC?

Organizations can prepare by running SOC readiness assessments, modeling their risk tolerance, and capturing institutional knowledge in a form agents can use. Building low-code security workflows and adopting hyperautomation strategies supports smoother adoption. A hybrid human-AI SOC combines machine efficiency with human judgment, enabling better decision-making, more scalable workloads, and more resilient security operations in increasingly complex digital environments.

Building the SOC That Can Actually Use Its Agents

To actually unlock the value of agentic AI, you need more than tools: you need the right structure, guidance, and operational clarity. That’s where experienced, vendor-neutral support makes the difference. If you’re looking to move from pilot to production with confidence, explore how expert consulting can help you streamline your SOC, reduce tool sprawl, and build a governance framework that works in the real world.

With the right foundation in place, your team can safely scale automation, improve visibility, and turn AI agents from sidelined experiments into real operational advantage.