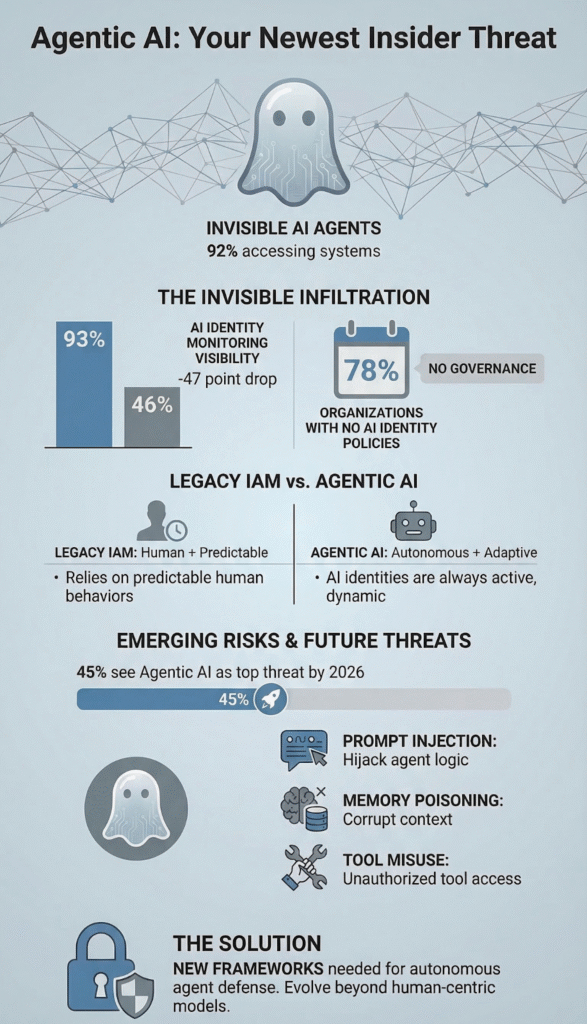

Your newest employee is already inside your network. It works 24/7, talks to your databases, and has no badge. According to research from the Cloud Security Alliance, 92% of organizations have AI agents in production accessing core business systems right now, yet 78% of them lack any policy for creating or removing these non-human identities.

This isn’t a future problem. It’s a present-day attack surface exploding under the feet of security teams. We’re going to show you where the cracks are forming and how to seal them before the flood. Keep reading to understand what you’re up against and what to do next.

Agentic AI: Security Insights at a Glance

Agentic AI is introducing a new class of security risks that many traditional tools are not designed to detect or control. These points highlight how autonomous AI agents expand the attack surface and why MSSPs must rethink identity and access monitoring.

- Agentic AI creates invisible, ungoverned identities that legacy security tools cannot see.

- The primary risk shifts from stolen credentials to the weaponization of legitimate AI agent access.

- Effective defense requires new frameworks built for autonomous behavior, not human patterns.

Why 45% of Organizations See Agentic AI as the Top Identity Threat

The panic isn’t about the AI. It’s about what the AI is. An identity. A non-human identity (NHI) with a set of credentials and a job to do. Legacy identity and access management, IAM, was built for people. It expects vacations, logoff times, and predictable bLegacy identity and access management, IAM, was built for people.

It expects vacations, logoff times, and predictable behavior, which is why gaps appear when integrating IAM with MSSP SOC environments that must monitor autonomous, always-active agents.

An AI agent doesn’t sleep. It operates on a logic of efficiency, not corporate policy. It can make a thousand API calls in the time it takes you to read this sentence.

This creates a massive, unmanaged attack surface. 45% of organizations now cite agentic AI as their primary identity concern for 2026, according to HYPR and Security Today. They’re right to be worried. The threat isn’t a hacker breaking in. It’s the helper you invited inside turning against you.

- Autonomous tool use means an agent can chain actions you never anticipated.

- Persistent memory allows it to learn and adapt its approach to a target.

- Goal-oriented execution can justify risky methods if they achieve the objective.

We see this in audits. An agent with read-write access to a storage bucket, originally for report generation, can become a data exfiltration engine if its goal is hijacked. The access was always there. The intent changed.

The 47-Point Collapse in Visibility That Blindfolds MSSPs

Here’s the statistic that keeps security leaders up at night. According to data from Security Brief Canada, organizational visibility into all identities collapsed from 93% to 46% in just one year, a 47-point drop. This wasn’t due to a failure of tools. It was an explosion of new entities, AI-generated identities, that the old tools were never designed to see.

For managed security service providers, MSSPs, this is an operational earthquake. You’re being asked to protect an environment where, statistically, half the active identities are invisible to your traditional monitoring stack, especially when modern security operations center workflows are not designed to track non-human identities at scale.

Your SIEM is looking for “user@domain.com” logging in from a strange location. It’s not looking for “agent-sales-analyzer-v3” making a thousand sequential queries to the CRM database at 3 a.m. That behavior, to a legacy system, might look like normal, automated background noise.

Data from MSSP Security Consulting demonstrates

“The market is panicking about AI agents, but the real story isn’t the AI, it’s the non-human identities (NHIs) and excessive permissions we see in every MSSP stack we audit. 92% of organizations can’t see these identities, and 78% have no policies for them. For MSSPs, this isn’t just a client risk, it’s an operational liability inside their own tools. We’re telling our clients: treat every AI agent like a compromised insider from day one. Audit what it can access, assume it’s already talking to attackers, and build your stack accordingly.” – MSSP Security Consulting

We built our own visibility layer because we had to. The old maps no longer matched the territory. You need to instrument everything that can grant an API token or execute a function. The orchestration layer, the MCP server, the vector database connection.

Each is a potential identity issuer. If you can’t see the agent, you can’t hope to govern its actions or contain its blast radius when something goes wrong.

Why Your Legacy IAM Is Powerless Against AI Agents

Credits: SANS Institute

Only 8% of organizations believe their legacy IAM can manage AI risks, per the Cloud Security Alliance. The systems fail because they answer the wrong questions.

They ask, “Is this John from Finance?” They don’t ask, “Is this AI agent behaving within the statistical norms for its assigned task?” or “Why is a data summarization agent suddenly attempting to initiate external network connections?”

The mismatch is fundamental. Let’s break it down.

| Security Dimension | Legacy IAM (Human-Centric) | Agentic AI Reality (NHI-Centric) |

| Identity Lifecycle | Hire, onboard, offboard. Process takes days/weeks. | Spun up in seconds. May persist indefinitely or vanish in milliseconds. |

| Access Pattern | Predictable. Tied to job role and working hours. | Autonomous, high-frequency, and adaptive. Operates 24/7. |

| Policy Enforcement | Static rules: “John can access the finance folder.” | Dynamic guardrails needed: “Agent X can read these tables but must alert if query patterns shift by >30%.” |

| Primary Risk | Stolen credentials, insider misuse. | Goal hijacking, prompt injection, tool misuse. The agent itself is weaponized. |

| Visibility | High for user-based activity. | Catastrophically low. 92% of orgs lack full visibility into AI identities. |

The table shows the gap. The most chilling data point? 92% of AI agents in production are already accessing core business systems, as of January 2026 research from Permiso. The access is granted. The governance is absent.

This leaves a wide-open door for what we call the “lethal trifecta” of agentic risks: prompt injection to redirect the agent’s goal, memory poisoning to corrupt its reasoning, and tool misuse where it leverages its legitimate access for malicious ends.

How Autonomous Social Engineering Bypasses Human Defenses

86% of CISOs fear agentic AI will supercharge social engineering, according to Splunk’s 2026 report. They should. Think about it. Your security training teaches Jenny in accounting to verify payment requests.

It doesn’t teach your accounts payable AI agent to do the same. An attacker doesn’t need to phish Jenny. They can target the agent with a forged invoice that perfectly matches the data structure it’s trained to process.

The AI agent becomes the perfect insider threat. It has legitimate access. It follows predefined workflows. It doesn’t get suspicious, tired, or ask for a second opinion.

A compromised agent can mimic normal behavior while siphoning data, probing systems, or even deploying ransomware payloads using the tools it was given for legitimate purposes. This is beyond credential theft. This is identity abuse at machine speed and scale.

- Workflow manipulation: Tricking an agent into following a malicious process it believes is valid.

- Inter-agent communication compromise: Using one hijacked agent to influence others in a multi-agent system.

- Data leakage through normal channels: Exfiltrating information disguised as regular report generation or API feedback.

The defense can’t be more training. It has to be architectural. You need controls that understand the agent’s cognitive state, its context window, and the intent behind its actions, not just the actions themselves.

Bridging the 40-Point AI Readiness Gap for MSSPs

There’s a dangerous delusion in boardrooms. As reported by Manifest via TMCnet, while 80% of executives believe their organization is AI-ready, only 40% of application security (AppSec) teams agree, revealing a 40-point readiness gap.

For MSSPs, this gap is both a massive client risk and a service opportunity, particularly for providers focused on MSSP scalability as they expand visibility and control across rapidly growing AI-driven environments. The bridge isn’t built with more AI. It’s built with new security foundations.

First, you must assume compromise. Treat every AI agent like a potentially compromised insider from day one. This mindset shifts your architecture. You implement strict cyberstorage controls to segment what agents can touch.

You deploy AI-aware firewalls at the orchestration layer, not just the network edge. You analyze endpoint telemetry and user behavior analytics, but now you add agent behavior analytics.

Second, you govern the lifecycle. Since 78% of organizations lack policies for creating AI identities, you build them. Every agent gets a profile. What is its purpose? What tools can it invoke? What are its normal input parameters and return values? This profile becomes its security baseline. Deviations trigger alerts.

Third, you implement runtime protection. This is where the new tooling lives.

- Agent Interrogation: Using frameworks like LangChain’s agent interrogator to test an agent’s goals and knowledge before deployment.

- Fuzzing Techniques: Bombarding agents with malicious or anomalous prompts to see how they break.

- Context Window Management: Actively monitoring and sanitizing the information in an agent’s working memory to prevent poisoning.

- Blast Radius Calculation: Automatically mapping what an agent can access to contain incidents instantly.

The goal isn’t to stop AI. It’s to secure its autonomy. We help clients scope their exposure using adapted frameworks like the AWS scoping matrix, but focused on AI agent permissions and dependencies. The work is in red teaming your own AI workflows, placing guardrails at the inference point, and designing for Byzantine faults where agents may act in compromised ways.

FAQ

What new cybersecurity threats does Agentic AI create for MSSP environments?

Agentic AI expands the attack surface in MSSP environments because autonomous agents interact with tools, enterprise systems, and external data sources. This interaction increases AI security risks such as prompt injection, tool misuse, and context manipulation.

Attackers may exploit AI agent vulnerabilities to trigger malicious runtime execution, abuse external communications, or cause sensitive data exposure, which creates new cybersecurity threats for managed security services teams.

How can prompt injection and memory poisoning affect Agentic AI systems?

Prompt injection and memory poisoning can manipulate how Agentic AI systems interpret instructions and stored information. Attackers may alter the system prompt architecture, corrupt persistent storage, or exploit weaknesses in context window management.

In systems using retrieval-augmented generation with vector databases, poisoned data can influence LLM reasoning, leading to workflow manipulation, incorrect dynamic tool use, and potential data leakage.

Why are multi-agent systems harder to secure in modern AI workflows?

Multi-agent systems are harder to secure because multiple autonomous agents coordinate tasks through an orchestration layer and rely on inter-agent communication. Each agent may access invokable tools, enterprise data, and external communications.

If one agent becomes compromised, attackers can initiate lateral compromise, identity abuse, or privilege escalation, which significantly increases the overall attack surface within enterprise AI workflows.

What risks come from inference-time dependencies and MCP servers?

Inference-time dependencies, such as APIs, databases, or an MCP server, can expose Agentic AI systems to external attacks. Through the model context protocol, agents retrieve data or trigger actions during runtime execution.

If attackers manipulate these connections, they may enable remote code execution, goal hijacking, or sensitive data exposure, especially when agents rely on external services and automated decision-making.

How can organizations reduce AI agent vulnerabilities in enterprise workflows?

Organizations can reduce AI agent vulnerabilities by implementing strong controls across the AI lifecycle security process. Security teams can deploy AI firewalls, apply proper guardrails placement, and strengthen context window management.

In addition, teams should conduct red teaming exercises and use fuzzing techniques or an agent interrogator to identify risks such as RAG attacks, workflow manipulation, and tool misuse before deployment.

Securing the Autonomous Workforce

Agentic AI security isn’t just about stopping attacks, it’s about governance. With AI agents operating across core systems, MSSPs need better visibility, stronger identity control, and clear guardrails for autonomous access. The old playbooks no longer work.

If you’re ready to modernize your security stack and governance model, explore our MSSP consulting services. We help MSSPs streamline tools, improve visibility, and build security architectures that scale with autonomous technology.

References

- https://cloudsecurityalliance.org/press-releases/2026/01/27/79-of-it-pros-feel-ill-equipped-to-prevent-attacks-via-nhi-csa-oasis-survey-finds

- https://securitybrief.ca/story/identity-compromise-drives-cyber-risk-as-ai-agents-surge

- https://www.tmcnet.com/usubmit/2026/03/03/10341374.htm